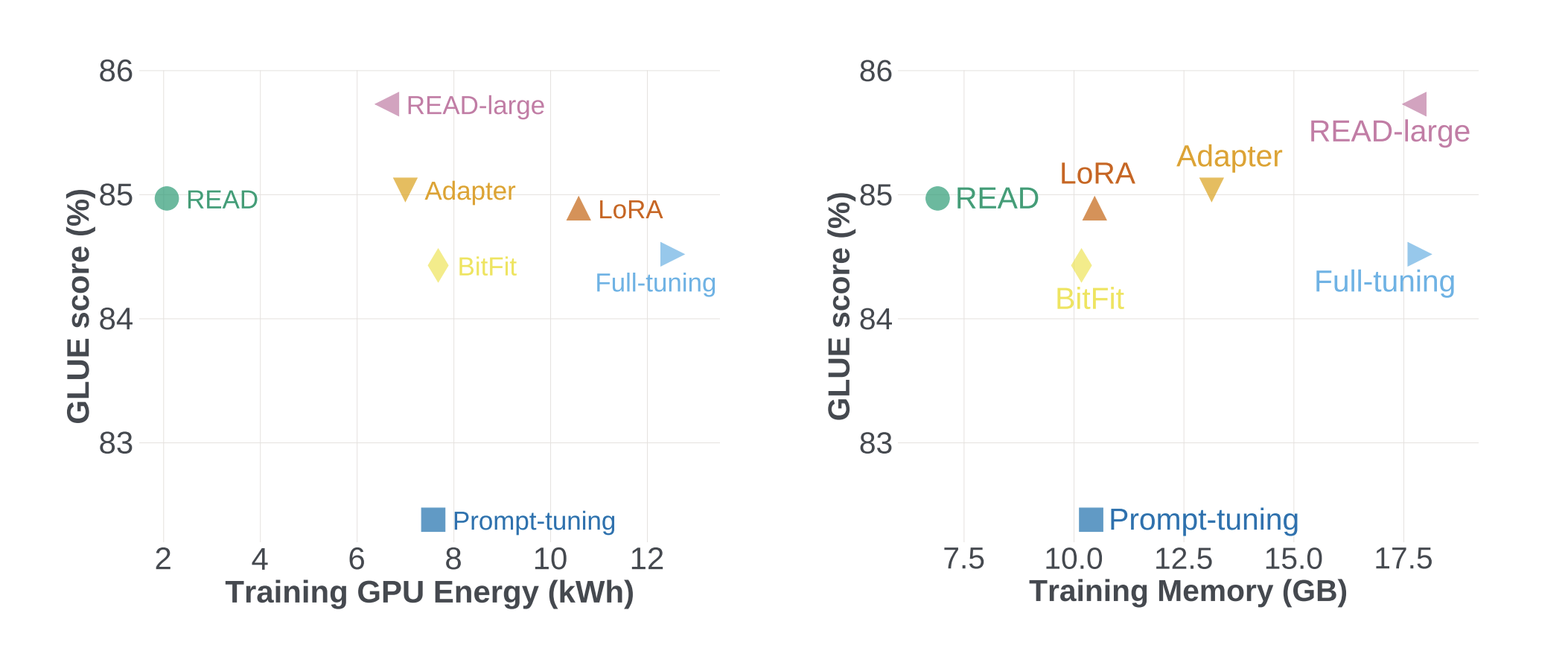

Revolutionizing AI efficiency: Meta AI’s new approach, READ, reduces training memory consumption by 56% and GPU energy usage by 84%

https://arxiv.org/abs/2305.15348

Large-scale transformer architectures have achieved state-of-the-art results on many natural language processing (NLP) tasks. These models are typically pre-trained on generic, web-scale data and then fine-tuned for specific downstream tasks. Increasing model size has been associated with several gains, including better predictive performance and sample efficiency. However, the cost …